How to AI?

Why your bottleneck matters more than your model

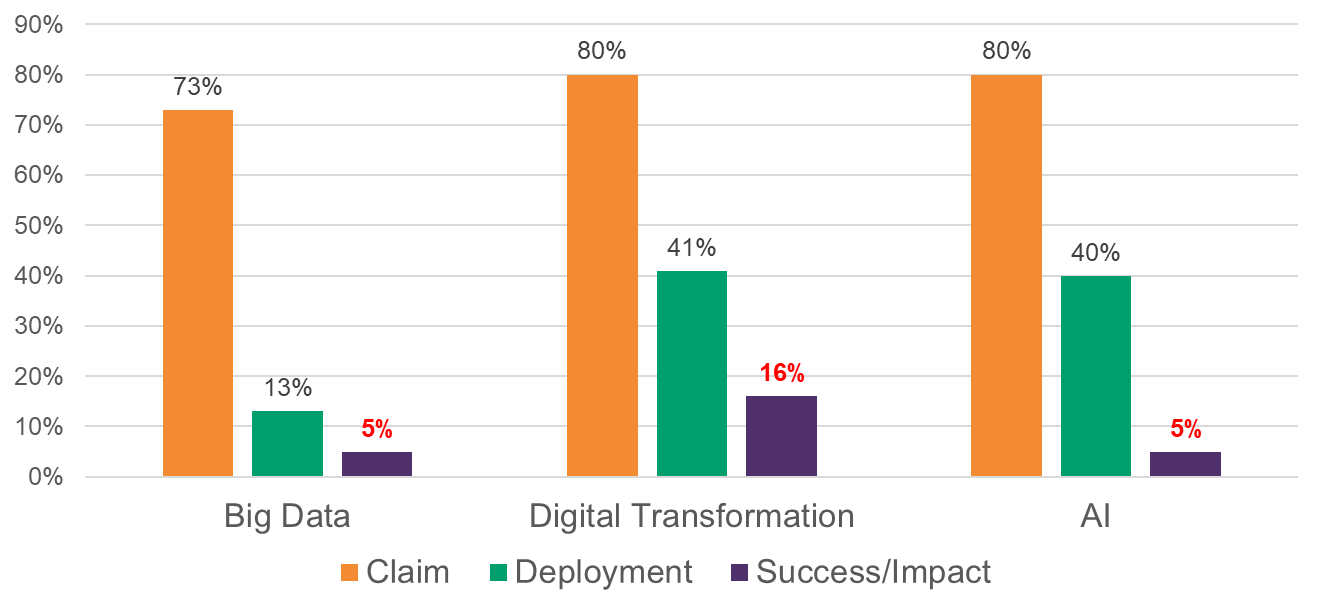

I am often asked to speak on geopolitics in many forums. Inevitably, the talk leads to China v US, the rise and fall of superpowers, and the AI race. I usually stick to my lane and talk about the geopolitical implications of shifts in trading blocs and alliances, export controls, the slow decoupling of the US and China, and the different paths the two countries are taking (building god-in-a-box vs. applications in advanced manufacturing). There is almost always a panel on AI as well, which I make a point of attending. But I leave frustrated that I learned very little, while the audience exhibits extreme FOMO. Well, they should all take a look at the graph below, which shows claims or plans for deploying a technology, the actual deployment, and measured success and impact, based on surveys by Gartner, McKinsey, and Deloitte.

“We use big data” → I have a big Excel sheet with many links, some circular.

“We are doing digital transformation” → we use Dropbox/Cloud

“We AI” → “I built a Chatbot and put it on my website.”

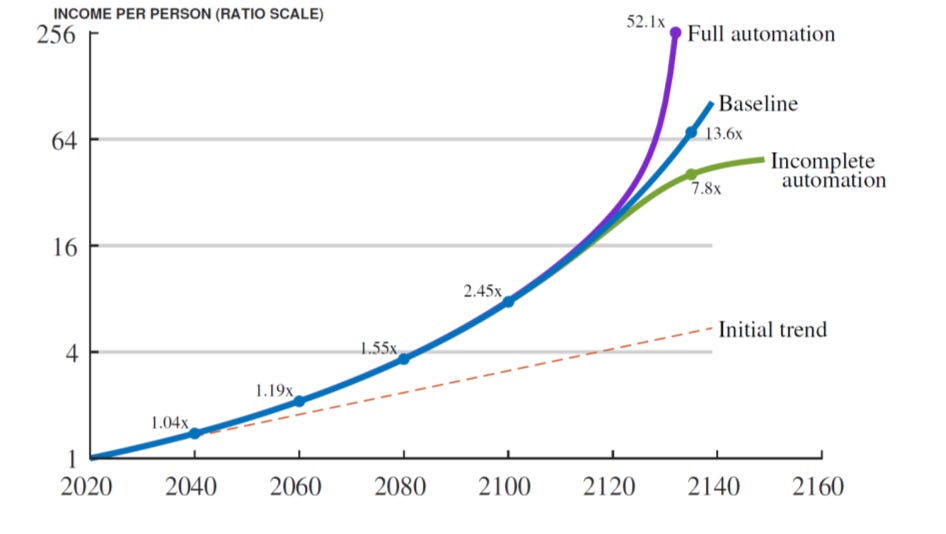

Yes, organizations are messy with legacy systems and processes, which always makes diffusion slower than aspired to. And AI may be a normal technology, so its impact on productivity and income may take decades. See Jones and Tonetti, who simulate the future - full automation can be transformative, but even if AI makes most tasks extremely productive, output is still pinned down by the shrinking set of tasks where human labor remains essential. That means the economy can be racing ahead on many margins, yet aggregate GDP per person moves only modestly above trend for quite a while.

More succinctly, in all three of their simulated worlds, machines are improving faster than humans, but the remaining human tasks still matter a lot because production has weak links. So let us talk about weak links - what on earth are they?

The O-Ring Problem

In January 1986, the space shuttle Challenger broke apart 73 seconds into its tenth flight. The shuttle was extraordinarily sophisticated - thousands of components, decades of accumulated engineering, the most ambitious flight program of its time. The investigation concluded that the failure came down to something very small. A pressure seal, called an O-ring, in one joint of one solid rocket motor. The morning was unusually cold, the rubber stiffened, and the seal failed.

Michael Kremer, who later won the Nobel Prize in 2019, formalized this idea in a 1993 paper titled The O-Ring Theory of Economic Development. Production depends on many tasks being done right. Failure in one critical task can destroy or sharply reduce output. The lesson is not that small things matter. The deeper lesson is that in certain systems, performance is governed not by your strongest component, but by your weakest critical one. This is the right starting point for thinking about AI. Questions about valuations, whether we are in a bubble, and dodgy depreciation schedules (see Michael Burry) all come later.

Once you take that seriously, the next question becomes: if one input falls short, say in production, can I compensate by using more of something else? That is what economists call the elasticity of substitution, a concept dating back to 1932. It asks a simple question:

If one input becomes scarce or expensive or weak, how easily can we compensate by using more of another input? If you can easily replace one input with another, the system is flexible. If you cannot, the inputs are complements. And then you get bottlenecks.

A model would help us think about this. Now, your instinct may be to look skeptically at models and esoteric concepts. Well, the alternative is consuming self-selected examples with a sample size of 1 in many cases, or going to conference after conference on AI with this nagging suspicion that something is afoot, but also, what on earth am I supposed to do? If you believe AI is a transformative technology, then the future is highly uncertain and unknowable, and disciplined thinking using models is your only option. As an aside, I also find it funny that everyone wants to use AI models, but if you flash a model, they have a conniption and say models are useless and unrealistic.

Huh?

In 2013, two Oxford economists, Carl Frey and Michael Osborne, released a paper that became famous for putting probabilities on the automation of every occupation in America. Accountants and auditors: 94% probability of automation. Bookkeeping clerks: 98%. Twelve years later, the US Bureau of Labor Statistics counts 1.6 million accountants and auditors, up from 1.25 million in 2013. The occupation is projected to grow by another 5% through 2034, faster than the average for all jobs. Bookkeeping clerks, on the other hand, are projected to fall by about 6% over the same horizon. Both were classified as highly automatable. Yet their labor market trajectories diverged.

Geoffrey Hinton famously said, “People should stop training radiologists now,” believing it was “completely obvious” AI would outperform human radiologists within five years. “If you work as a radiologist, you’re like the coyote that’s already over the edge of the cliff but hasn’t yet looked down.” Fast forward to today, and the prediction has proven far from prescient: radiologists are in greater demand, at higher salaries.[1]

What did these forecasts miss? Not the technology. The arithmetic of automation has worked out roughly as predicted; software does increasingly handle ledger reconciliation. AI does well at specific image-recognition subtasks within radiology. What the forecasts missed is that a job is not a sum of separable tasks. It is a multiplicative bundle. Consider an accountant - she interprets tax law for a specific client, signs the audit that the bank and the SEC will rely on, and carries the legal exposure when something goes wrong. Strip out the spreadsheet drudgery and the bundle stays. Strip it out of the bookkeeping clerk’s job, and very little is left. Similarly, a radiologist’s job is more than just reading MRIs: it spans clinical history, prior comparisons, multimodal data, and patient context.

Klarna's recent experience is the corporate version of the same lesson.[2] In 2024, the Swedish payments company announced it had replaced roughly 700 customer service representatives with an AI agent and would no longer hire humans. Fifteen months later, it was quietly hiring people again, because the customer experience had degraded in ways the spreadsheets had not predicted. The bundle of empathy, escalation, and brand was tighter than the task list suggested. It must be slightly mortifying to deliver a productivity miracle in one quarter and a customer-service apology by the next.

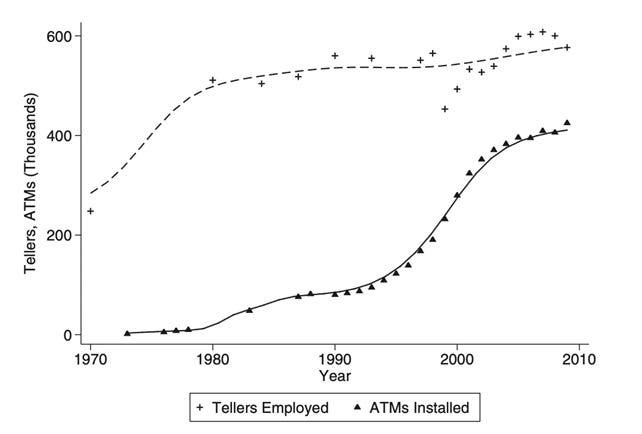

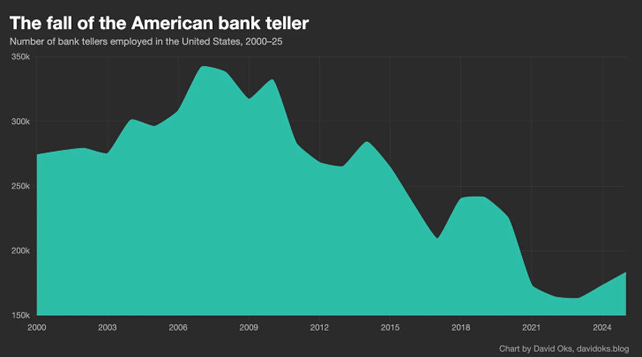

There is a separate question of whether AI ends up *creating* new tasks and new jobs even as it eliminates old ones — the bank-teller story is the classic example, where ATMs cut tellers per branch but lower per-branch costs led to more branches and rising total teller employment for a quarter-century (see below). Daron Acemoglu and Pascual Restrepo have developed a careful framework around this “reinstatement effect”: how new technologies create new tasks and roles where human labor holds a comparative advantage, counterbalancing the job-displacing effects of automation.

In 2007, the iPhone was introduced and…

Mobile banking helped erode the branch model itself, especially after the financial crisis and a wave of bank consolidation. That is a different mechanism, and I will return to it in a future post.

A Simple Task-Based Model

AI forecasters and managers start with these questions:

‒ Can AI replace this job? (a little naïve, but the iPhone did this)

‒ Can AI do this task? (more sophisticated)

‒ How will AI impact the workflow? (far more sophisticated)

But a job is a bundle of tasks + responsibility + coordination + accountability, so AI can automate a task without automating the job. Luis Garicano, in a recent essay, builds on this idea that the task is not the job. Travel agents lost the booking task — total agency employment is now 60% below its dot-com peak. But the agents who survived moved upmarket, charged planning fees, joined luxury consortia, and now earn 99% of the private-sector average wage, up from 87% in 2000. The machine took the weak part of the bundle. The humans kept the strong part.

In his formulation, jobs can either be a “weak bundle” where tasks can be separated cheaply (travel booking; basic bookkeeping) or a “strong bundle” where a task cannot be separated cheaply from judgment, liability, or context (accountant; lawyer; C-suite). In a weak bundle, AI will either narrow the job or eliminate it. In a strong bundle, the AI augments the worker by either taking over certain tasks (making them more efficient) or replacing some tasks in the workflow (the accountant has the AI handle bookkeeping).

Consider a simple model with output flowing from a sequence of tasks. Each task has a probability q of being done correctly. Most people think of tasks as additive, so we can easily replace one task with an AI. Something like Y = q₁ + q₂ + … + qₙ.

Think of replying to 100 customer emails. Each reply stands on its own, and adding AI to the workflow or replacing a task with AI is a straightforward productivity gain. Here, ask whether AI can do a task independently and whether you can replace a human with AI. The only constraint is the cost of AI, and investment in automation and scale translates into real productivity gains. Note that in such a linear additive world, where one task can replace another at a constant rate, the elasticity of substitution is infinite and tasks are perfect substitutes for one another. That is a very strong assumption.

But in many instances, the output is determined by the weakest and most critical component. This is the extreme O-ring version (called Leontief), where output equals the minimum of the inputs or tasks (q₁, q₂, etc.), with tasks as extreme complements.

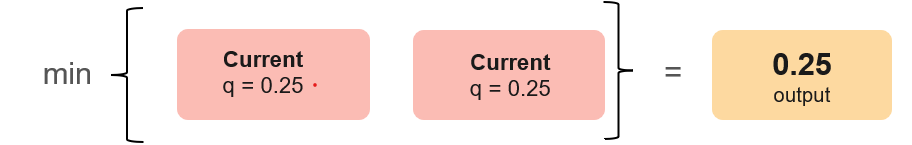

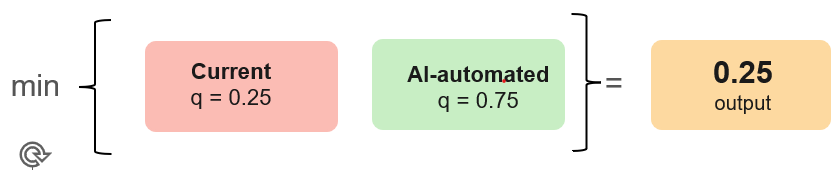

A rocket launch. A surgical procedure. A regulatory filing. Miss one step and the whole thing is gone, and a better engine on the rocket buys you nothing if the seal fails. Here, the elasticity of substitution is zero, and the weakest task sets the ceiling. Automating one or more tasks will not improve output or productivity if another task remains a bottleneck. See a simple example below.
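To make the contrast concrete, here is a minimal sketch in Python. The three task qualities are made-up numbers for illustration, and the function names are my own labels rather than anything from the literature.

```python
def additive_output(qualities):
    """Additive world: tasks are perfect substitutes, output is the sum."""
    return sum(qualities)


def leontief_output(qualities):
    """Extreme O-ring (Leontief) world: output equals the weakest task."""
    return min(qualities)


# Hypothetical task qualities: task 1 (index 1) is the weak link.
before = [0.9, 0.5, 0.8]
# Automate task 0 and push its quality close to perfect.
after = [0.99, 0.5, 0.8]

print(f"additive: {additive_output(before):.2f} -> {additive_output(after):.2f}")
print(f"leontief: {leontief_output(before):.2f} -> {leontief_output(after):.2f}")
```

In the additive world the upgrade shows up in output. In the Leontief world output stays at 0.5, because the task that was automated was never the binding one.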

So ask yourself: am I in an extreme O-ring world, where a single failure breaks the entire workflow? If yes, better AI buys you near-zero gains, and hallucinations are catastrophic. The work is sequential and unglamorous — identify the binding constraint, fix it, then go find the next one. Over time you do end up upgrading every layer — data, workflow, infrastructure, talent, compliance, governance — but only one at a time, and the order matters. Most firms have neither the diagnostic apparatus nor the patience for that. So instead they jabber about AI, parachute in a chatbot, monitor how many employees are "using AI tools," and remain puzzled why none of it shows up in the productivity numbers.
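The "find the binding constraint, fix it, find the next one" routine can be sketched as a loop. This is only an illustration under strong assumptions: the layer names come from the paragraph above, but the quality scores, the 0.9 target, and the idea that a fix lifts a layer straight to the target are all hypothetical.

```python
def fix_bottlenecks(qualities, target=0.9, max_rounds=10):
    """Repeatedly lift the weakest layer to `target`.

    Returns a list of (layer_fixed, output_after_fix) pairs, where output
    is the minimum across layers (the extreme O-ring assumption).
    """
    q = dict(qualities)  # copy so the caller's dict is untouched
    history = []
    for _ in range(max_rounds):
        weakest = min(q, key=q.get)
        if q[weakest] >= target:
            break  # nothing left below the target
        q[weakest] = target
        history.append((weakest, min(q.values())))
    return history


# Hypothetical scores for each layer of the firm.
layers = {
    "data": 0.4,
    "workflow": 0.6,
    "infrastructure": 0.8,
    "talent": 0.7,
    "compliance": 0.5,
}

history = fix_bottlenecks(layers)
for layer, output in history:
    print(f"fixed {layer:<14} -> output {output:.1f}")

# Upgrading a layer that is not binding changes nothing.
wrong_order = dict(layers, infrastructure=0.99)
print(f"upgrade infrastructure first -> output {min(wrong_order.values()):.1f}")
```

Each round moves output only as far as the next-weakest layer allows, which is why the order matters and why a shiny upgrade to a non-binding layer leaves output at 0.4.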

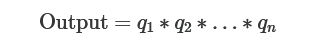

A less extreme but more realistic version is one in which tasks are, in a sense, multiplicative (as used in the Kremer paper).[3]
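One compact way to line up the additive, multiplicative, and Leontief cases is the textbook constant-elasticity-of-substitution (CES) form. This is a sketch for orientation, not something this post or the Kremer paper writes down: the weights α_i and the parameter ρ are notation introduced here for illustration, and σ denotes the elasticity of substitution.

```latex
\[
Y = \left( \sum_{i=1}^{n} \alpha_i \, q_i^{\rho} \right)^{1/\rho},
\qquad \alpha_i > 0,\quad \sum_{i=1}^{n} \alpha_i = 1,
\qquad \sigma = \frac{1}{1-\rho}
\]

\[
\begin{array}{lll}
\rho = 1          & Y = \sum_i \alpha_i q_i      & \sigma = \infty \ \text{(additive, perfect substitutes)} \\
\rho \to 0        & Y = \prod_i q_i^{\alpha_i}   & \sigma = 1 \ \text{(multiplicative)} \\
\rho \to -\infty  & Y = \min_i q_i               & \sigma = 0 \ \text{(Leontief, extreme O-ring)}
\end{array}
\]
```

The plain product q₁ × q₂ × … × qₙ is the middle case with equal weights, raised to the power n. That rescaling changes the level of output but leaves the elasticity of substitution at one.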

A book is content × editing × cover design × distribution. A great manuscript with no distribution is a sad manuscript. A programmer’s code that partially works is unusable. A cook’s recipe is not dinner, and you cannot substitute salt with sugar. An HR executive’s screening process is wasted if onboarding, manager quality, and incentives are not effective. A CTO writes code, manages teams, and talks to the board, and the most brilliant code in the world will not save them if the board has lost confidence. Here is an example of what could happen.

You automate one task where using AI nearly doubles task quality (q rises from 0.5 to 0.9). But the workflow change makes the complementary task harder and its quality deteriorates (q falls from 0.5 to 0.2). In an additive world, output would rise from 1.0 to 1.1. In a multiplicative world, output falls from 0.25 to 0.18, a sharp decline of 28%. How can this happen? As you shrink and automate routine tasks, the remaining tasks become harder, not easier. They require more attention to exception-handling, deeper judgment, and more stringent accountability. The returns to whoever does that surviving task go up, not down.
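The arithmetic is easy to check. A minimal sketch, using only the numbers in the paragraph above (the function and variable names are mine):

```python
def additive(q_a, q_b):
    """Output when tasks simply add up."""
    return q_a + q_b


def multiplicative(q_a, q_b):
    """Output when tasks multiply, as in the O-ring setup."""
    return q_a * q_b


before = (0.5, 0.5)  # both tasks at q = 0.5
after = (0.9, 0.2)   # AI lifts Task A, Task B deteriorates

add_before, add_after = additive(*before), additive(*after)
mul_before, mul_after = multiplicative(*before), multiplicative(*after)
pct_change = (mul_after - mul_before) / mul_before * 100

print(f"additive:       {add_before:.2f} -> {add_after:.2f}")
print(f"multiplicative: {mul_before:.2f} -> {mul_after:.2f} ({pct_change:.0f}%)")
```

The same pair of changes reads as a 10% gain in the additive world and a 28% loss in the multiplicative one.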

Now assume Task B does not deteriorate and stays at 0.5. Output rises from 0.25 to 0.45 (0.9 × 0.5). That is a real gain, but notice the ceiling. Even a perfect, non-hallucinating AI on Task A, with q = 1, only takes output to 0.5 (1.0 × 0.5). The human-intensive task is the binding constraint, and AI cannot get you past it. So in a multiplicative world, automation and augmentation can help, sometimes a lot, but the slowest-improving critical task caps the returns from AI. At least in today's world.
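A short sketch of the ceiling, again with the numbers above. The last comparison, a 0.1 improvement in Task B, is a hypothetical I have added to show where the next unit of effort pays more.

```python
def output(q_a, q_b):
    """Two-task multiplicative production."""
    return q_a * q_b


q_a, q_b = 0.9, 0.5
base = output(q_a, q_b)        # AI on Task A, Task B unchanged
ceiling = output(1.0, q_b)     # perfect AI on Task A: output cannot exceed q_b

# Where does the next improvement pay more?
gain_from_a = output(1.0, q_b) - base        # push Task A from 0.9 to 1.0
gain_from_b = output(q_a, q_b + 0.1) - base  # hypothetical: lift Task B from 0.5 to 0.6

print(f"base output:          {base:.2f}")
print(f"ceiling (q_A = 1):    {ceiling:.2f}")
print(f"gain from better A:   {gain_from_a:.2f}")
print(f"gain from better B:   {gain_from_b:.2f}")
```

With Task A already at 0.9, the same 0.1 improvement is worth almost twice as much when it goes to the weak task instead.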

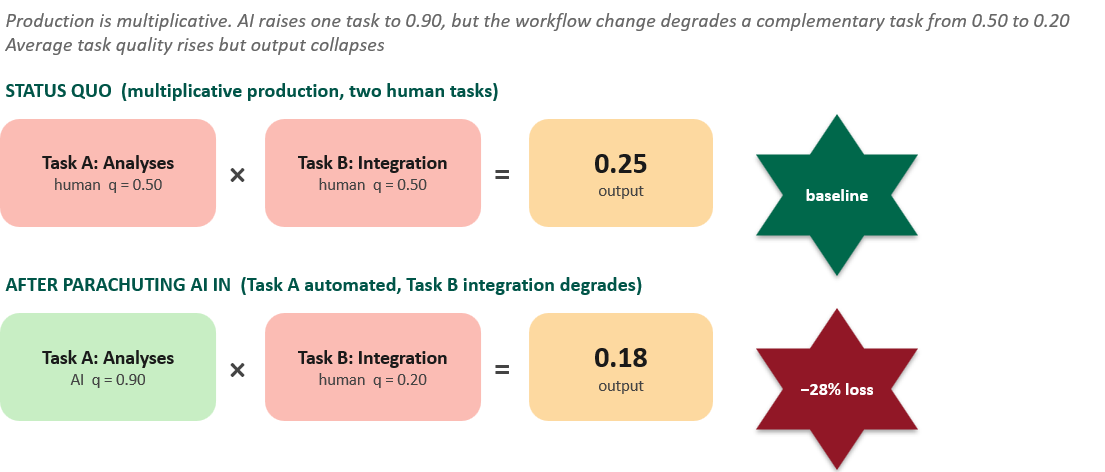

AI productivity looks like

“How much should we AI” is only the first question. A more persistent question is “What are the binding constraints that will prevent us from scaling AI inside the firm?” In practice, the constraint is rarely the model or even talent. It is one of:

‒ Data quality and accessibility (you have data, but it is scattered across 12 systems, and one spreadsheet is called “final_final_v7”)

‒ Integration with legacy systems (the data is technically digital, but some of it is scanned images and some is old faxes in a dusty folder in the basement of a person who retired in 2019)

‒ Workflow and org design (the analysis is instantaneous; the procurement committee still meets on Tuesdays)

‒ Trust in outputs (the model is right often enough to be useful but hallucinates enough to terrify Legal)

‒ Incentives, decision rights, and liability (here comes GDPR)

Most firms are approaching AI as if it were a standalone technology. The questions getting asked: how many pilots do we have, how many models are we deploying, how many licenses, how many employees are using generative AI for coding. These are not useless metrics, but they are not the right ones if the real problem is bottlenecks, constraints, and complementarity. They tell you whether AI is in the building. They do not tell you whether the firm’s production function has changed.

That is why so much of the AI conversation feels strangely unsatisfying to me. The conference panel tells you “AI is here”. The vendor demo tells you that “the model is magical”. The CEO tells you that “everyone is experimenting”. Few tell you where the bottleneck is.

Until firms can answer that question, they will keep mistaking adoption for transformation. They will buy the tools, launch the pilots, count the prompts, celebrate the use cases, and then wonder why the productivity numbers refuse to move.

[1] Another lesson is that god-level competency in one domain does not translate into competency in others. Elon Musk & DOGE, Mark Zuckerberg & education, Jeff Bezos & healthcare.

[2] FT and BCG have documented similar reversals at retailers and contact-centre operators across 2024–25.

[3] Here, the elasticity of substitution is one. If it is less than one, then tasks are complements. If greater than one, they are substitutes. If infinite, they are perfect substitutes.

I agree that automating a task, or even a collection of tasks, is not the same as automating a job. Jobs often sit on top of tasks and require human affordances such as context, responsibility, judgment, liability, and coordination.

I also agree that bottlenecks are a pragmatic route for quick wins. But I would be careful not to limit the AI opportunity space only to bottlenecks that are already visible and measurable. As Solow put it in what became known as the productivity paradox, “You can see the computer age everywhere but in the productivity statistics.” Not everything that counts can be counted, and not everything that can be counted counts.

The risk is that firms focus only on measurable workflow bottlenecks while leaving a deeper “organization design bottleneck” outside the frame: could the organization itself be redesigned differently around AI?

In complex adaptive systems, the better approach may be to preserve ambiguity rather than prematurely collapse it into measurable bottlenecks. Firms need to ride both routines at once: exploitation, by using AI to improve visible constraints in existing workflows; and exploration, by asking whether AI makes entirely different organizational designs possible.

If incumbents restrict exploration to existing processes and measurable constraints, they may fall prey to the myopia of learning described by Levinthal: improving locally within the current architecture while missing the possibility of a different one. In that case, new organizations may have a structural advantage, because they can explore AI-enabled designs without the same legacy constraints.

Fascinating read... the key point is that AI should be used to reimagine workflows, rather than be deployed just for incremental improvements. Such an approach will help identify the bottlenecks (I am reminded of the Theory of Constraints that Goldratt explained so well in his book, 'The Goal', and how improving system flow requires identifying and managing these constraints rather than optimizing every individual machine). If we take this as a basis, then successful implementation of AI should result in an 'improved system flow = workflow', rather than AI applied only at the task level...